NVIDIA Announces New AI Performance Optimizations and Integrations for Windows

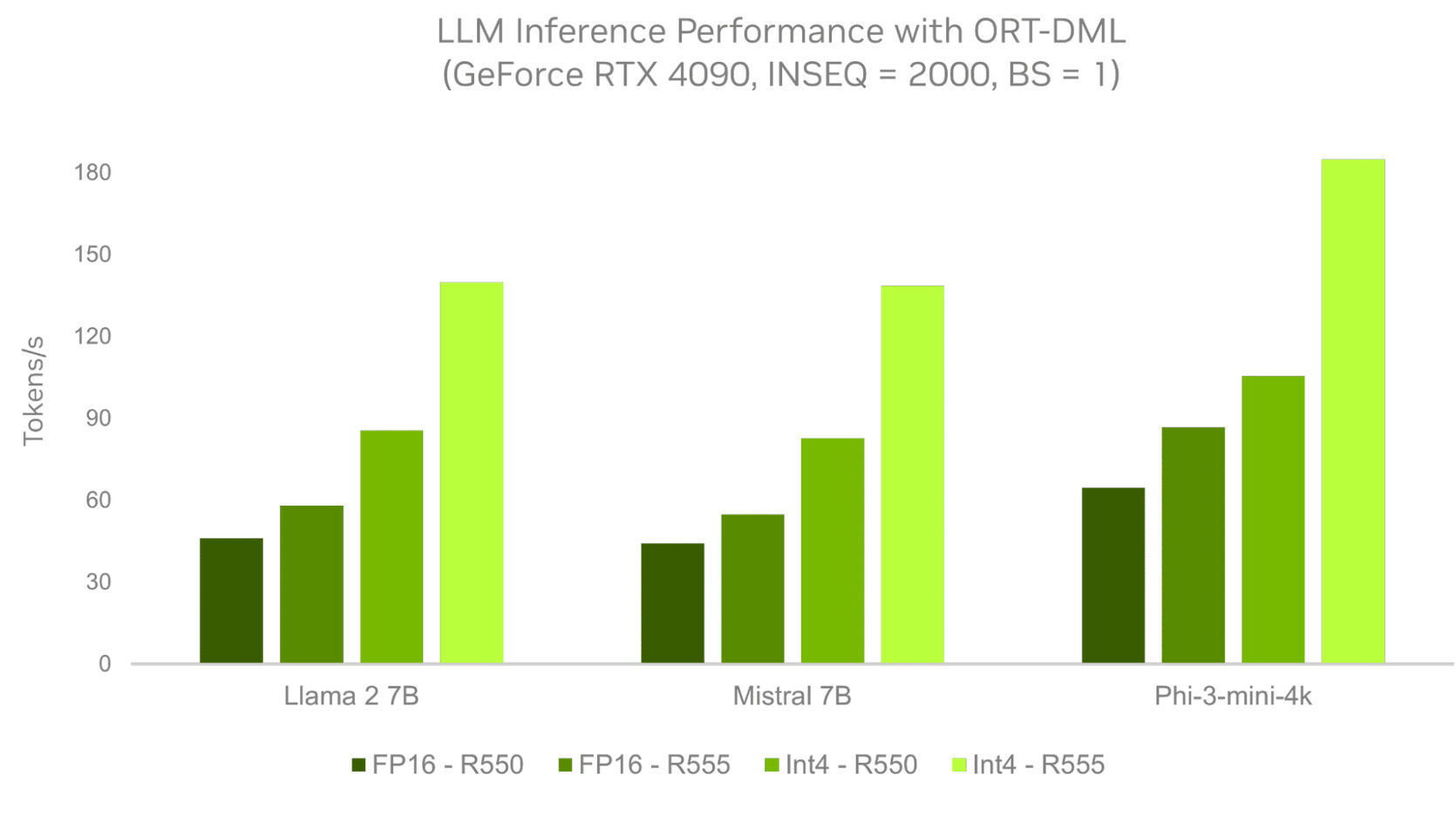

NVIDIA has announced new AI performance optimizations and integrations for Windows at Microsoft Build. These enhancements aim to deliver maximum performance on NVIDIA GeForce RTX AI PCs and NVIDIA RTX workstations. Large language models (LLMs) that power generative AI use cases can now run up to 3x faster with ONNX Runtime (ORT) and DirectML using the new NVIDIA R555 Game Ready Driver. ORT and DirectML are high-performance tools used to run AI models locally on Windows PCs.

WebNN, an application programming interface for web developers to deploy AI models, is now accelerated with RTX via DirectML. This enables web apps to incorporate fast, AI-powered capabilities. Additionally, PyTorch will support DirectML execution backends, allowing Windows developers to train complex AI models and run inference on them natively on Windows. NVIDIA and Microsoft are collaborating to scale performance on RTX GPUs, building on NVIDIA's leading AI platform that accelerates over 500 applications and games on more than 100 million RTX AI PCs and workstations worldwide.

RTX AI PCs - Enhanced AI for Gamers, Creators and Developers

NVIDIA introduced the first PC GPUs with dedicated AI acceleration, the GeForce RTX 20 Series with Tensor Cores, in 2018. Its latest GPUs offer up to 1,300 trillion operations per second (TOPS) of dedicated AI performance. In the coming months, Copilot+ PCs equipped with new power-efficient systems-on-a-chip and RTX GPUs will be released, giving gamers, creators, enthusiasts, and developers more performance for demanding local AI workloads.

For gamers on RTX AI PCs, NVIDIA DLSS boosts frame rates by up to 4x, while NVIDIA ACE brings game characters to life with AI-driven dialogue, animation, and speech. Content creators benefit from RTX-powered AI-assisted production workflows in apps like Adobe Premiere, Blackmagic Design DaVinci Resolve, and Blender. Game modders can use NVIDIA RTX Remix to create RTX remasters of classic PC games, while livestreamers can enhance their streams with NVIDIA Broadcast and RTX Video.

AI assistants and copilots built on LLMs run faster on RTX GPUs, enhancing productivity. Developers can build and fine-tune AI models directly on their devices using NVIDIA's AI developer tools, including NVIDIA AI Workbench, NVIDIA cuDNN, and CUDA on Windows Subsystem for Linux.

Faster LLMs and New Capabilities for Web Developers

Microsoft recently released the generative AI extension for ORT, adding support for optimization techniques like quantization for LLMs. ORT with the DirectML backend offers Windows AI developers a quick path to developing AI capabilities. NVIDIA optimizations for the generative AI extension for ORT, available in the R555 Game Ready, Studio, and NVIDIA RTX Enterprise Drivers, provide up to 3x faster performance on RTX compared to previous drivers.
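
For developers who want to see what targeting the DirectML backend looks like in practice, the following is a minimal sketch using the core ONNX Runtime Python API rather than the generative AI extension itself. It assumes an ORT build that includes DirectML (for example, the `onnxruntime-directml` package) and falls back to the CPU otherwise. The helper names `choose_providers` and `create_session` are hypothetical and exist only for this illustration.

```python
from typing import List, Sequence

# Execution provider names as registered by ONNX Runtime.
# DirectML is exposed as "DmlExecutionProvider"; the CPU provider is the fallback.
PREFERRED_PROVIDERS = ("DmlExecutionProvider", "CPUExecutionProvider")


def choose_providers(available: Sequence[str]) -> List[str]:
    """Return the preferred providers that are available, in priority order."""
    chosen = [p for p in PREFERRED_PROVIDERS if p in available]
    if not chosen:
        raise RuntimeError("no usable ONNX Runtime execution provider found")
    return chosen


def create_session(model_path: str):
    """Open an ONNX model, preferring DirectML when this ORT build offers it.

    Assumption: onnxruntime is installed. It is imported lazily so that
    choose_providers() can be used and tested without it.
    """
    import onnxruntime as ort

    providers = choose_providers(ort.get_available_providers())
    return ort.InferenceSession(model_path, providers=providers)
```

On a machine where DirectML is available, `create_session("model.onnx")` would run the model through DirectML on the GPU, where `model.onnx` is a placeholder path. Listing the CPU provider second keeps the same code working on systems without a DirectML-enabled build.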

WebNN, now available in developer preview, uses DirectML and ORT Web to accelerate deep learning models with on-device AI accelerators. Popular models like Stable Diffusion, SD Turbo, and Whisper run up to 4x faster on WebNN compared to WebGPU. Developers can learn more about developing on RTX at the "Accelerating development on Windows PCs with RTX AI" session at Microsoft Build.